How Diffusion Models Generate Images: The Math Behind AI Creativity

ai math machine-learning computer-vision

Every digital image, every photo ever taken, every painting, every AI-generated portrait, exists inside an unimaginably large mathematical space.

That might sound philosophical. It’s actually combinatorics.

When you understand how digital images are represented mathematically, you start to see generative AI differently. AI isn’t “drawing.” It’s navigating probability.

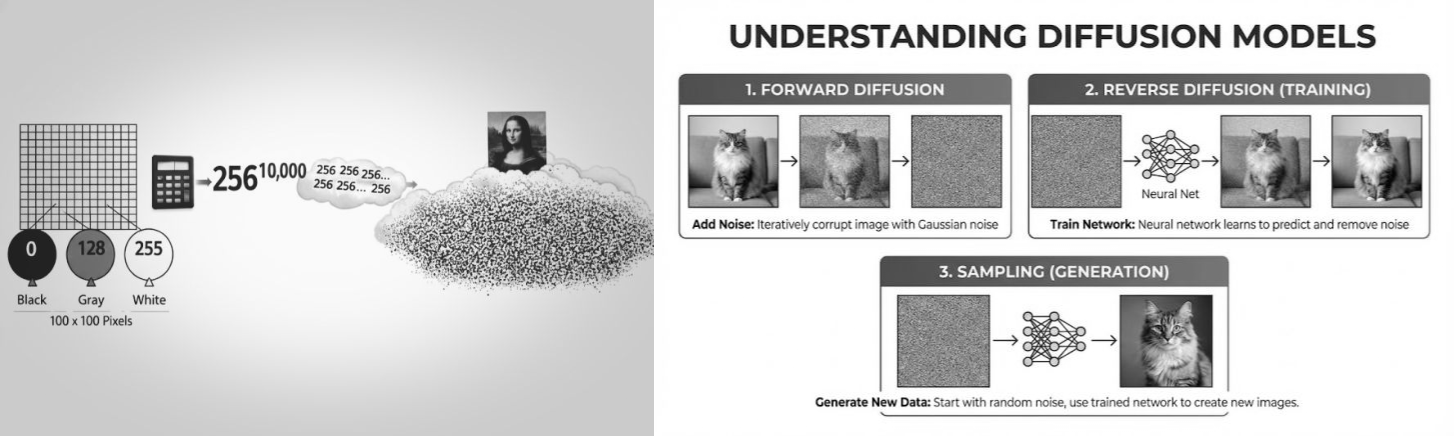

The Mathematics of Pixels

A grayscale digital image is a 2D array of numbers. Each pixel stores a single integer between 0 and 255, where 0 is pure black and 255 is pure white.

A 100 x 100 image is just 10,000 integers in a grid. Nothing more.

Each pixel has 256 possible values. The total number of possible 100 x 100 grayscale images:

256 ^ 10,000

That number has over 24,000 digits. To write it out, you’d fill about 12 pages with nothing but digits.

Most of these combinations are meaningless noise. But mathematically, every possible image, including every photo ever taken and every photo that will ever be taken, exists somewhere in this space.

How Large Is Image Space?

The numbers grow exponentially with resolution and color.

| Image Type | Possible Combinations | Scale |

|---|---|---|

| Grains of sand on Earth | 10^19 | 20 digits |

| Atoms in the observable universe | 10^80 | 81 digits |

| 100x100 grayscale | 256^10,000 | 24,083 digits |

| 100x100 color (RGB) | 256^30,000 | 72,000+ digits |

| 12MP color photo | 256^36,000,000 | 86 million+ digits |

The space of possible images isn’t just larger than the physical universe. It’s larger by a factor that itself has thousands of digits. These aren’t in the same category of largeness.

If every atom in the universe were a supercomputer generating a billion images per second since the Big Bang, they wouldn’t have scratched the surface.

The key insight: structured, meaningful images occupy an unimaginably tiny corner of this space. Almost everything else is noise.

What a Camera Actually Does

This reframes photography in a fundamental way.

A camera doesn’t create an image. The image already existed as a mathematical possibility. What a camera does is select the one specific arrangement of pixel values that corresponds to physical reality at that moment.

It’s a selection mechanism. The physics of light, the lens, the sensor, the analog-to-digital conversion, it’s all an elaborate pipeline for extracting one number sequence from a space of possibilities that was always there.

How Diffusion Models Work

This connects directly to how modern AI generates images.

A diffusion model creates images by learning to reverse a noise process. Instead of searching through all possible pixel combinations, it learns which direction to move from noise toward structure.

During training:

- Take a real image

- Gradually corrupt it with noise across many timesteps

- Train a neural network to predict and reverse each small noise step

The model learns one thing: given a slightly noisy image, how to make it slightly cleaner.

During generation:

- Start from pure random noise

- Apply hundreds of small denoising steps

- Each step nudges the pixels toward the region of image space where realistic images live

The result: a guided walk from a random point in pixel space to a meaningful one.

Why This Approach Works

The space of all possible images is incomprehensibly large. But the space of realistic images, images that contain recognizable objects, coherent lighting, and natural textures, is a tiny, structured manifold within it.

Diffusion models work because they:

- Map the geometry of this manifold from training data

- Avoid brute-force enumeration entirely

- Move incrementally, making small corrections rather than large jumps

- Stay on the manifold of realistic images throughout generation

They don’t explore 256^(W x H) possibilities. They learn the shape of the tiny region where natural images live and navigate directly toward it.

AI Isn’t Drawing. It’s Filtering Probability.

This reframes what generative AI actually does.

It is not randomly generating pixels. It is not searching all combinations. It is not creating from nothing.

It is navigating high-dimensional probability space. Learning statistical structure from real data. Moving step-by-step from chaos toward meaning.

When you type a prompt into Stable Diffusion or DALL-E, you’re giving the model a destination in image space. The model’s job is to find a path from random noise to that destination, guided by everything it learned about what images look like.

Rethinking Creativity

This raises an uncomfortable question. If every possible image already exists mathematically, what does it mean to create something new?

Humans navigate possibility spaces too. We recombine ideas. We filter patterns. We move toward structure through intuition, experience, and taste.

A photographer points a camera and selects one pixel arrangement from all mathematical possibilities. A diffusion model follows learned gradients through noise to reach a different one. The mechanisms are entirely different. The fundamental operation, finding meaningful signal in a space that is almost entirely noise, is the same.

This doesn’t diminish human creativity. It highlights what makes it remarkable: navigating an impossibly large space and consistently finding meaning, using faculties that no brute-force enumeration could replicate.

Frequently Asked Questions

Do diffusion models search all possible images?

No. The space is astronomically large. They learn to move directly toward realistic regions instead of searching.

Why are most pixel combinations just noise?

Structured images are an extremely small subset of all possible pixel arrangements. Random combinations almost never produce recognizable patterns. This is a foundational concept in information theory.

How is this related to computer vision?

Computer vision and image generation are two sides of the same coin. Vision models learn to interpret the structure within images. Generative models learn to produce that structure. Both rely on understanding the geometry of image space.

Are AI models creative?

They learn statistical structure and navigate toward meaningful outputs within a probability landscape. Whether that constitutes creativity depends on your definition, but the underlying mathematical operation is the same one humans perform when making creative decisions.

Final Thought

Every possible image already exists mathematically. Intelligence isn’t about generating pixels.

It’s about knowing which direction to move.

That’s what diffusion models do exceptionally well.